- Researchers have developed a new system that lets users control flying drones with their eyes.

- It consists of lightweight eye-tracking glasses and an inertial measurement unit.

- The glasses provide 2D gaze coordinates, which are then converted into 3D using a deep neural network.

The popularity of drones has increased over the past few years, and the trend is likely to continue in the near future thanks to their wide range of applications. Did you know that civilian drones vastly outnumber military drones, with more than a million sold by 2015?

Despite the ubiquity of these unmanned aerial vehicles, learning how to fly and control them is a complex task. Some of these machines come with an obstacle-avoidance system, but that alone does not make flying easy enough.

A few institutes have come up with creative solutions, such as controlling drones with the face, other body parts, or even the brain. Each solution has its own advantages and disadvantages, and each offers a different combination of capability and convenience.

The more features you want in a control system, the less convenient it tends to be. Building a system that is both more capable and easy to use is challenging, but researchers at New York University and the University of Pennsylvania have developed lightweight gaze-tracking glasses that allow you to control a drone simply by looking at the position where you want it to go.

What’s New Here?

The drone control system does not depend on any external sensors, and instead of making the system drone-relative, the researchers designed it to be user-relative. Handling a drone with a conventional remote, for instance, is drone-relative: if you command the drone to go right, it goes to its own right, regardless of where you are standing. A user-relative drone, on the other hand, sees things from your perspective and moves in directions defined relative to you.
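The difference between the two approaches can be sketched as a change of reference frame. The snippet below is only an illustration, not the researchers' implementation: the function name `user_to_drone_frame`, the flat 2D world, and the heading convention (radians, counterclockwise) are all assumptions made for the example.

```python
import math

def user_to_drone_frame(forward, left, user_yaw, drone_yaw):
    """Convert a command given in the user's frame into the drone's body frame.

    forward/left: command components as seen by the user (hypothetical convention).
    user_yaw/drone_yaw: headings in radians, counterclockwise, in a shared world frame.
    """
    # Rotate the command from the user's frame into the world frame.
    wx = forward * math.cos(user_yaw) - left * math.sin(user_yaw)
    wy = forward * math.sin(user_yaw) + left * math.cos(user_yaw)
    # Rotate the world vector into the drone's body frame.
    body_forward = wx * math.cos(drone_yaw) + wy * math.sin(drone_yaw)
    body_left = -wx * math.sin(drone_yaw) + wy * math.cos(drone_yaw)
    return body_forward, body_left

# The drone is facing the user, so their headings differ by 180 degrees.
# "Go to my right" is left = -1 in the user's frame.
cmd = user_to_drone_frame(forward=0.0, left=-1.0, user_yaw=0.0, drone_yaw=math.pi)
```

In this example the drone has to move to its own left to end up on the user's right, which is exactly the mental flip a conventional remote leaves to the pilot.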

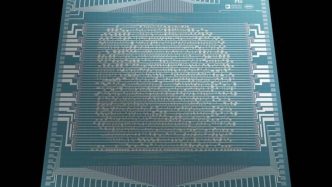

Credit: NYU

For this to work correctly, the control system needs enough information about the drone’s orientation and the user’s location. Only then can it localize the drone relative to you without requiring external sensors or GPS.

This is made possible by a pair of lightweight, wearable eye-tracking glasses, the Tobii Pro Glasses 2, which are equipped with a high-definition camera and an inertial measurement unit. The camera continuously observes the drone, and a deep neural network estimates how far away it is from its apparent size. That distance is then combined with the head-orientation signal to estimate the position of the drone relative to you.
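To give a feel for why apparent size carries distance information, here is a rough sketch that swaps the deep neural network for a plain pinhole-camera formula. It is not the method used in the study; the drone width, focal length, and function names are hypothetical, and the drone is assumed to sit at the center of the camera image.

```python
import math

def distance_from_apparent_size(real_width_m, focal_length_px, apparent_width_px):
    """Pinhole-camera range estimate: the closer the object, the larger it appears."""
    return focal_length_px * real_width_m / apparent_width_px

def drone_position(distance_m, head_yaw, head_pitch):
    """Drone position in a frame centered on the user (x ahead, y left, z up).

    Assumes the drone is at the image center, so the viewing direction
    equals the head orientation (angles in radians, pitch positive upward).
    """
    x = distance_m * math.cos(head_pitch) * math.cos(head_yaw)
    y = distance_m * math.cos(head_pitch) * math.sin(head_yaw)
    z = distance_m * math.sin(head_pitch)
    return x, y, z

# Hypothetical numbers: a 0.5 m wide drone that spans 50 pixels
# in a camera with a 1000-pixel focal length is about 10 m away.
d = distance_from_apparent_size(real_width_m=0.5, focal_length_px=1000.0,
                                apparent_width_px=50.0)
position = drone_position(d, head_yaw=math.radians(90), head_pitch=0.0)
```

A learned model can cope with things this formula ignores, such as the drone being seen from different angles, which is one plausible reason to prefer a network over a fixed geometric rule.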

Reference: IEEE Spectrum | University of Texas at Austin

The glasses track the movement of your eyes, convert the point you are looking at into a vector, and send it to the drone, commanding it to fly there. The hardest part is computing the 3D navigation waypoint. Imagine how hard it is to tell whether a person is looking 30 feet away or 35 feet away just by tracking their pupils.

The neural networks that compute the 3D navigation waypoint from the 2D gaze coordinates (provided by the glasses) are trained on NVIDIA GeForce GTX 1080 Ti GPUs using the TensorFlow deep learning framework. The network is also trained to perform object detection.
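The depth problem can be made concrete with a small geometric sketch. It assumes a pinhole camera with made-up intrinsics (`fx`, `fy`, `cx`, `cy`) and gaze coordinates given in pixels; none of these values come from the study, and the real system uses a neural network rather than this closed-form step.

```python
import math

def gaze_ray(u, v, fx, fy, cx, cy):
    """Unit direction through pixel (u, v) in the camera frame (x right, y down, z forward)."""
    x = (u - cx) / fx
    y = (v - cy) / fy
    norm = math.sqrt(x * x + y * y + 1.0)
    return x / norm, y / norm, 1.0 / norm

def waypoint(ray, depth_m):
    """3D waypoint at the given distance along the gaze ray."""
    return tuple(depth_m * c for c in ray)

FEET_TO_M = 0.3048

# One gaze point in the image (hypothetical 1920x1080 camera)...
ray = gaze_ray(u=1160.0, v=540.0, fx=1000.0, fy=1000.0, cx=960.0, cy=540.0)

# ...is consistent with waypoints at any depth along the same ray.
near = waypoint(ray, 30 * FEET_TO_M)
far = waypoint(ray, 35 * FEET_TO_M)
gap_m = math.dist(near, far)  # distance between the two candidate waypoints
```

The 2D gaze point fixes only the direction of the ray. The two candidate waypoints are about 1.5 m apart yet produce the same gaze coordinates, so the depth has to come from somewhere else.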

Future and Applications

The researchers plan to improve the system further by reducing the noise in the data from the glasses, which would offer more control over depth (the 3D waypoint). Integrating additional interaction modes, such as gestures, voice, or visual cues, could add new capabilities or even help with the depth issues.

Eventually, the system could allow amateur users to fly drones effectively and safely in dense areas. It could even allow users to fly more than one drone simultaneously. The researchers believe that this method can be applied to a wide range of scenarios, such as inspection, and could be used by people with disabilities that affect their mobility.